Speakers: Tim Brewer (CEO & Co-Founder, Functionly).

Tim Brewer isn’t a property manager. He’s a CEO, leadership advisor, and podcast host who has spent the last three years sitting inside some of the world’s largest organizations watching how leaders navigate change — and increasingly, how they’re navigating AI.

At Happy Summit 2026, he brought that outside-in perspective to a room full of multifamily operators, sharing five observations that he believes will determine who thrives in the AI era and who gets left behind.

This guide captures his five-point framework — practical, honest, and grounded in what’s actually happening at the front lines of AI deployment in organizations right now.

About the Speaker: Tim Brewer

Tim Brewer is CEO and Co-Founder of Functionly, a DIY org design software platform. He hosts the OrgDesign Podcast, a six-year-running series on how leaders are navigating organizational change.

The Framework: Five Things Tim is Noticing Right Now

Tim organized his talk around five observations from the front lines of AI deployment in major organizations over the past 12 months. Each one applies directly to property management leaders navigating the same transformation.

1 | Structure, Fragility, & Resistance to Change

Humans are wired to resist change as self-protection. Getting buy-in early is almost everything. Once someone enters survival mode, you've likely lost them. How you frame role changes determines whether people engage or shut down.

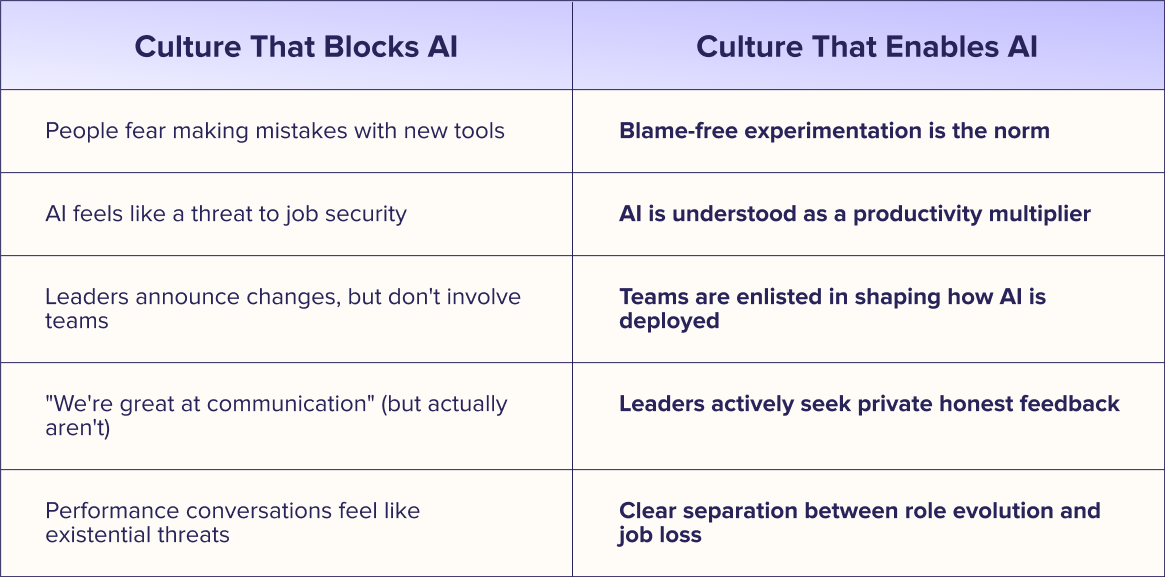

2 | AI Culture Eats AI Strategy for Breakfast

You can have the best AI deployment plan in the world. If your culture is lacking trust and psychological safety, the plan will fail. Culture is the operating system.

3 | Managing AI Agents Is a New Leadership Discipline

AI agents don't manage themselves. Managing AI agents is as demanding as managing people — and most leaders haven’t started learning how to do it yet. Start building those skills today.

4 | Your Data Is Your Advantage — or Your Mistake

Data quality and data protection will determine your AI outcomes. And your proprietary data is the one thing competitors can't replicate. Your data is either your greatest asset or your greatest mistake.

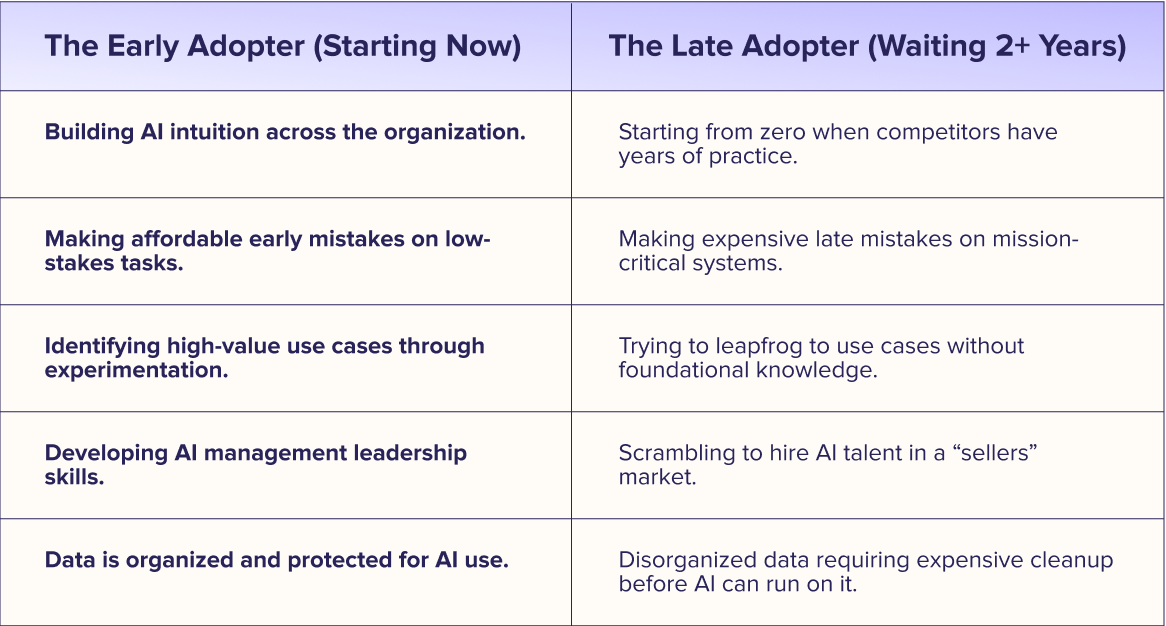

5 | The Compounding Returns of Early Adoption

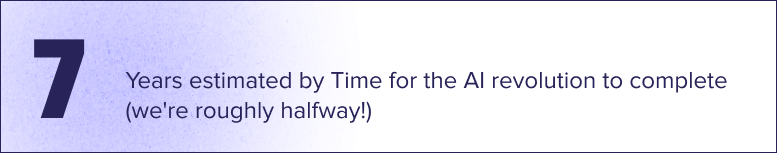

Tim estimates that the AI revolution will take about seven years to complete, and that we are roughly halfway through. AI adoption is a compounding return. What you learn this year puts you not one year ahead but exponentially ahead. Start now.

Getting Ahead of Change: How to Win Buy-In Before AI Resistance Starts

The first and most fundamental thing Tim has observed is also the most obvious one that organizations consistently fail to account for: humans do not like change. This isn't a character flaw or a management failure. It's biology. When people perceive a threat to their position, role, or identity, they enter a self-protective mode. And once someone is in that mode, getting them back into a change-ready state is extraordinarily difficult.

This matters enormously for AI deployment because AI almost always represents change — to roles, to workflows, and to what people spend their time doing. The instinct is to announce the change and manage the resistance afterward. Tim's observation is that this sequence is backwards.

Getting Buy-In Before the Resistance Starts

The organizations that navigate change well — AI or otherwise — get buy-in early. Not "we told people about this," but genuinely involving the people who will be affected in shaping how the change happens. There's a meaningful difference between informing people and enlisting them. The latter produces dramatically better outcomes.

“When someone goes into protecting themselves, it's nearly impossible to get them back in a change scenario. Getting buy-in early is everything.” — Tim Brewer | CEO & Co-Founder, Functionly

The “Hats” Framework: Roles Instead of Identities

One of the most practically useful tools Tim shared is a reframe for how people think about their work when roles change. The challenge with traditional job descriptions is that they become fused with personal identity. "I am the person who does X" becomes much harder to change than "I currently wear the hat that does X."

Tim’s practical solution is to change how employees describe what they do. Instead of defining themselves by a job title, which creates identity attachment, people are encouraged to think of their work as a collection of roles, or “hats,” that they wear.

The "hats" framework offers a strategic advantage for AI adoption. By helping employees distinguish which tasks they would prefer to delegate to an AI agent versus those they wish to master, the transition shifts from a top-down mandate to a collaborative partnership.

This approach has a profound impact on AI deployment: when an automated agent takes over a task, framing it as the "movement of a hat" is significantly less intimidating than suggesting a role is being phased out. This perspective ensures the person remains valued while the work itself evolves, turning the implementation into a dialogue about strengths and energy rather than a struggle for professional survival.

“If you see those hats that you wear as roles, and those roles can move around the organization in a more streamlined way, it becomes a lot easier. I had a founder wearing four or five different hats. Two weeks later he realized he only wanted to wear one — and he started shifting the rest to others.” — Tim Brewer | CEO & Co-Founder, Functionly

Practical Application

Conduct a 'hats audit' with your staff before any major AI launch. Ask each person to list the hats they currently wear, then identify which ones they would rather hand to an AI agent and which ones they want to master. Run this way, the rollout becomes a joint effort and a discussion about energy and strengths, rather than a forced directive or a fight for job security.

The #1 Reason AI Fails (It's Not the Tech)

Of all five observations, this one landed the hardest. Tim has spoken with leaders across some of the largest organizations in the world — and he’s watched countless well-funded, well-intentioned AI initiatives fail. The culprit is almost never the technology.

In most cases, the cause wasn't the strategy. It wasn't the tool selection. It wasn't the budget or the timeline. It was the culture.

“Even if you’re deploying AI in your organization and you have the best strategy in the whole planet about how you’re going to do it — if your culture is lacking, people don’t feel safe, there’s no amount of effort that’s going to change that. I have talked to endless leaders that have spent immense amounts of money, time, and resources and their AI deployments have failed. And a huge part of that is not the effort, money, or time they spent. It’s that the culture of the organization wasn’t in a good place to adapt.” — Tim Brewer | CEO & Co-Founder, Functionly

The success of any AI initiative hinges on one primary cultural factor: trust. Psychological safety allows team members to experiment without the shadow of fear. If employees harbor unspoken anxieties that AI is a precursor to job cuts, the most sophisticated AI strategy will inevitably falter due to this fundamental cultural barrier.

A lack of transparent expectations and a deficit of trust between leadership and employees are highly likely to lead to failed AI integrations. In these rapidly evolving, high-uncertainty environments, the absence of a trusting foundation pushes employees into a defensive posture: resisting change and attempting to outlast the transition.

The Feedback Permission System

Tim illustrated the culture point with a simple practice he considers the most reliable differentiator between great leaders and average ones: the permission conversation.

The idea is this: as a leader, you proactively give your team explicit permission to give you feedback — privately, without judgment, and specifically when you're not meeting the stated values of the organization. Not general feedback. Not anonymous feedback. Personal, direct, private feedback on values alignment.

“I would say to my team: no matter your role, you have my permission to come to me privately — without telling the other ten people at those tables — and give me feedback when I’m not meeting the stated values of our organization. And I give you my word that I’ll take it on board, hear you out, and give it consideration. Any time.” — Tim Brewer | CEO & Co-Founder, Functionly

This one practice does several things simultaneously. It signals that the leader is genuinely committed to the values, not just to performing them. It creates a safety valve for real concerns before they become silent resentment. And it builds the kind of trust that makes rapid change — including AI adoption — possible.

The Trust-Speed Equation

High-trust organizations move through AI adoption faster. This is not a soft observation — it's a compounding advantage. Every point of trust you build today accelerates every change initiative you'll run in the next three years. Trust is infrastructure.

The New Manager: Leading Humans & Machines

Addressing the common anxiety about AI-driven job loss, Tim described a subtler shift. The conventional individual contributor (IC) role, defined by executing tasks without management responsibilities, is fading, but the work itself is not. What changes is how people relate to the execution of that work.

“I think in most organizations that role’s probably coming to an end. But it does not mean individual contributors will have no role in organizations. The tasks that add no value, or that no one really enjoys doing—those will increasingly be automated. What remains is the higher-value, engaging work that people actually want to do.” — Tim Brewer | CEO & Co-Founder, Functionly

Tasks that add little value, that nobody particularly enjoys, and that are repetitive and rule-based will increasingly be automated or delegated to AI. The person who was doing those tasks doesn't disappear. They evolve into someone who manages and directs the agents doing those tasks, while focusing their own capacity on the higher-value work that genuinely requires human judgment.

Why Managing Agents is Harder Than it Looks

The default assumption is that deploying AI tools is like deploying email: roll it out, people adopt it, and productivity improves. Tim's observation is that this assumption is wrong in an important way.

AI agents aren't passive tools. They require direction, oversight, quality checking, and management. The skills that make someone a great people manager — clear delegation, quality standards, feedback loops, and knowing when to intervene — are the same skills required to manage AI agents effectively. And those skills take years to develop.

“All of those agents require management. It took Ben (President at HappyCo) 25 years to become a great people leader. You have to start today helping your people work out what managing agents looks like. It takes time, effort, intent, training, and practice.” — Tim Brewer | CEO & Co-Founder, Functionly

The implication for multifamily operators is concrete: agent management needs to be on your leadership development agenda now. Not when the agents arrive. Not when the workflows are deployed. Now. Because by the time agents are a core part of your operations, you want your leaders to already have a year or two of experience managing them.

For Leaders Now

Begin honing your agent management capabilities by assigning an AI agent a tangible task coupled with a defined quality standard. Evaluate its performance, offer direct feedback, and establish escalation protocols for when it misses the mark. Identifying the skills you lack in this process defines your immediate professional development agenda. Select a team member prepared to pilot AI agent management and entrust them with a specific workflow supported by these tools. By building a consistent feedback loop, you are treating this transition as a vital leadership investment. Delaying this puts you at a disadvantage, as forward-thinking organizations began this journey a year ago.

Is Your Data an Asset or a Liability in the Age of AI?

Most organizations treat data as a byproduct. Something that accumulates as a side effect of operations and gets queried occasionally for reports. Tim argues that this framing is outdated and, in an AI context, actively dangerous.

Proprietary organizational data — your operational patterns, your maintenance history, your resident behavior signals, and your vendor performance records — is one of the only things that will differentiate you from competitors as AI makes general knowledge infinitely available to everyone. When Claude or ChatGPT can answer almost any factual question instantly, what you know that others don't comes from what you've observed and recorded that others haven't.

The Determinism Problem

There's a technical dimension to the data quality argument as well. Everyone who uses AI tools notices that they don't give exactly the same answer twice. Ask the same question on different days, get slightly different responses. This variability is a feature in creative contexts and a liability in operational ones.

The path to deterministic AI — AI that produces consistent, reliable outputs for the same inputs — runs through data quality. When the data going in is clean, structured, and consistently organized, the outputs become more predictable. When it's messy, inconsistent, and siloed, the AI is unreliable.

“The outcome that everyone really would like is that AI is deterministic — when you run a process, it behaves the same way and the same outputs come in a consistent way. But the reality is AI today gives me a slightly different answer almost every single time. The key to getting to deterministic AI is actually the quality of the data you have in your organization. You need people in your organization who care deeply about how you structure and document data. That's not a tech role — it's a strategic one.” — Tim Brewer | CEO & Co-Founder, Functionly

The Public AI Data Risk

Tim also flagged a risk that is already materializing in organizations he works with: employees uploading sensitive company data to public AI tools. Proprietary processes, competitive strategies, client information, and financial data are all being fed into public LLM interfaces that may train on, retain, or otherwise expose that data.

Once your organizational secret sauce is out in the public cloud, it's effectively public. This isn't a hypothetical future risk. It's happening right now, inside most organizations, because the employees using these tools don't think of it as a data breach. They think of it as using a productivity tool.

“I’ve seen countless incidents where people are uploading their company data and company processes to public instances of LLMs — and now your organization’s secret sauce is out in the cloud. You need to protect it.” — Tim Brewer | CEO & Co-Founder, Functionly

The Data Protection Imperative

Every time a team member pastes internal operational data, client information, or proprietary processes into a public AI tool, that data potentially becomes part of that tool’s training data. Establish explicit policies about what data can and cannot be uploaded to external AI platforms. Invest in controlled AI environments where your data stays within your organization's boundaries. Make data governance part of your AI adoption policy, not an afterthought.
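A written policy can be backed by a simple automated pre-check that runs before text leaves the organization. The Python sketch below is illustrative only: the category names, the regular expressions, and the function `check_before_upload` are all hypothetical, and real sensitive-data detection would need to be far more thorough than three patterns.

```python
import re

# Hypothetical categories of data that policy says must not leave the organization.
# These patterns are illustrative assumptions, not a complete or authoritative list.
BLOCKED_PATTERNS = {
    "email_address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "internal_marker": re.compile(r"\b(confidential|internal only)\b", re.IGNORECASE),
}


def check_before_upload(text: str) -> list[str]:
    """Return the sorted names of blocked categories found in the text."""
    return sorted(
        name for name, pattern in BLOCKED_PATTERNS.items() if pattern.search(text)
    )


if __name__ == "__main__":
    draft = "Internal only: rent roll for unit 4B, contact jane.doe@example.com"
    violations = check_before_upload(draft)
    if violations:
        print("Do not paste into a public AI tool:", ", ".join(violations))
    else:
        print("No blocked patterns found.")
```

A check like this will miss a great deal, so it works best as a prompt for human judgment alongside the policy and the controlled AI environment, not as a replacement for either.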

The Acquisition Data Challenge

Tim also touched on a scenario that is painfully familiar to anyone in multifamily growth mode: acquiring a property and inheriting chaos. Boxes of files. Disorganized records. Scattered paperwork that someone has to manually work through to understand what they've bought.

Organizations that have invested in smart data organization — structured, searchable, and well-documented — have a compounding advantage in this scenario. Due diligence is faster. Integration is cheaper. The asset starts performing better sooner. Conversely, organizations that have neglected data organization are paying a tax on every acquisition they make.

High-quality, well-structured, consistently documented data is the prerequisite for reliable AI. Tim observed that every organization has people who care deeply about how data is organized and documented — and those people are currently undervalued. In the AI era, they’re becoming strategically critical. Identify who in your organization has a natural affinity for data structure and documentation. Invest in building or partnering with a function that treats data as a living, curated asset.

The Cost of Waiting: Why Your AI Journey Needs to Start Now

The final lesson is the one Tim drove home most urgently: the organizations that are learning, experimenting, and deploying AI right now are building a compounding advantage. And it doesn't compound linearly.

The Compounding Calculation

If your competitor started deploying AI 12 months before you, they don’t just have 12 months of experience. They have 12 months of compounded learning, process refinement, staff development, and data quality improvement. Each month of delay makes catching up harder — not linearly, but exponentially.
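A toy model makes the difference between linear and compounding growth concrete. The 5% monthly improvement rate below is purely an assumption chosen for illustration, not a figure from Tim's talk, and the function names are hypothetical. The sketch compares the gap a late starter faces on day one under compounding growth with the gap under simple linear growth.

```python
def capability(months: int, monthly_gain: float = 0.05) -> float:
    """Relative capability after `months` of compounding improvement (starts at 1.0)."""
    return (1 + monthly_gain) ** months


def compounding_gap(delay_months: int, monthly_gain: float = 0.05) -> float:
    """Gap between an early adopter and a newcomer on the day the newcomer starts."""
    return capability(delay_months, monthly_gain) - capability(0, monthly_gain)


def linear_gap(delay_months: int, monthly_gain: float = 0.05) -> float:
    """The same gap if improvement merely added up instead of compounding."""
    return delay_months * monthly_gain


if __name__ == "__main__":
    for delay in (6, 12, 24):
        print(
            f"{delay:>2} months late: "
            f"compounding gap = {compounding_gap(delay):.2f}, "
            f"linear gap = {linear_gap(delay):.2f}"
        )
```

Under these assumptions, doubling the delay more than doubles the compounding gap, which is the sense in which waiting gets more expensive over time.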

The instinct that many leaders have — “I'll wait until it's more settled, until there's a clear winner, until the technology is more mature” — feels prudent. Tim's argument is that this instinct is exactly backwards in the context of AI, because the value of early adoption isn't just in what you deploy. It's in what you learn.

“What you learn this year compared with what you learn next year will put you not a year ahead of everyone else — it will put you way ahead of everyone else. I don’t want to be a late adopter in this cycle.” — Tim Brewer | CEO & Co-Founder, Functionly

The Seven-Year Timeline

Tim offered a specific timeframe: the full AI revolution is likely to play out over approximately seven years. As of March 2026, we are roughly halfway through that window. The organizations that started early have three and a half years of learning compounding for them. The organizations that wait another two years to start will be entering a market where their competitors have five and a half years of accumulated AI capability.

The analogy to the Industrial Revolution is instructive: that transition took about 70 years from first spark to full landing. The AI transition is moving roughly ten times faster. The window to be an early mover is still open — but it’s narrowing.
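The timeline arithmetic can be checked in a few lines. The seven-year and 70-year figures are Tim's estimates as quoted above. The function names are hypothetical, and the sketch assumes that an "early" organization started at the very beginning of the window.

```python
# Estimates quoted in the talk, not measurements.
AI_TRANSITION_YEARS = 7.0
INDUSTRIAL_REVOLUTION_YEARS = 70.0


def years_elapsed(fraction_complete: float = 0.5) -> float:
    """Years already elapsed if the transition is `fraction_complete` done."""
    return AI_TRANSITION_YEARS * fraction_complete


def competitor_lead(extra_wait_years: float, fraction_complete: float = 0.5) -> float:
    """Experience an early starter has by the time a late starter begins."""
    return years_elapsed(fraction_complete) + extra_wait_years


def speed_ratio() -> float:
    """How many times faster the AI transition is than the Industrial Revolution."""
    return INDUSTRIAL_REVOLUTION_YEARS / AI_TRANSITION_YEARS


if __name__ == "__main__":
    print("Years elapsed so far:", years_elapsed())
    print("Competitor lead after waiting two more years:", competitor_lead(2))
    print("Speed relative to the Industrial Revolution:", speed_ratio())
```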

“For those of you who haven’t started yet, the time to start was yesterday. You don’t have to do it all yourself — picking the right partnerships and finding the right vendors that are already thinking around the corner is a great way to leapfrog where you are.” — Tim Brewer | CEO & Co-Founder, Functionly

Picking the Right Partners

Leverage was Tim's concluding theme: this is not a journey you must navigate alone. For those operating in the multifamily sector, the choice of vendors and platforms is critical; they will either act as a catalyst for your AI development or leave the entire burden of implementation on your shoulders.

Companies that have invested heavily in AI — constructing robust data frameworks, complex integration logic, and automated workflows — offer a proven path forward. Rather than recreating existing solutions, you should align with forward-thinking partners whose innovation and product roadmap can directly propel your own strategic goals.

The Closing Thought

“The answer to what’s coming in the AI era is not what the big companies are doing. It’s how we lead change in our organizations and how we approach AI. You don’t have to feel a sense of chaos. Leading change well — in your team, your culture, your data — is within your control.”

— Tim Brewer, CEO & Co-Founder of Functionly

Lauren Seagren is the Content Marketing Specialist at HappyCo, where she leads the company’s content strategy and storytelling across channels. She develops and optimizes campaigns, blogs, case studies, and enablement materials, while building the systems that help content scale and align across teams. Prior to HappyCo, Lauren led content and brand strategy across SaaS startups, creative agencies, and growth-stage companies, bringing more than a decade of experience driving measurable growth across B2B and B2C organizations.